MES Software: Vendors, Features & Costs Compared 2026

MES software compared: vendors, functions per VDI 5600, costs (cloud vs. on-premise) and implementation. Honest market overview 2026.

TL;DR: The often-cited "world-class OEE of 85 %" is a useful orientation — but very few plants sustain it. The global average across discrete manufacturing is 55–65 %. In pharma packaging, 70 % can be excellent. In high-volume automotive, 80 % is realistic. The number depends entirely on industry, product mix, automation level, and — critically — how you measure. The most dangerous benchmark mistake: comparing manual Excel OEE to automatically measured OEE. Manual values are systematically 8–12 percentage points higher than reality. Before you benchmark against anyone else, establish your own accurate baseline first.


Empirical studies and large-scale analyses consistently show the same pattern: the gap between "world-class" claims and factory-floor reality is enormous.

| OEE range | Classification | What it means in practice |
|---|---|---|
| < 40 % | First digitalization stage | Typical when automatic measurement starts and real losses become visible for the first time (Evocon 2023) |
| 40–55 % | Below average | Significant improvement potential. Common in brownfield plants with high manual share. |
| 55–70 % | Industry average | Where most discrete manufacturers land with honest, automatic measurement (Evocon, OEE.com) |
| 70–80 % | Advanced | Structured improvement programs in place. Best-in-class for process industries (SCW.ai: pharma world-class ≈ 70 %) |
| 80–85 % | World-class | Sustained only by the top 5–10 % of plants. High automation, stable product mix, mature TPM (Sage Clarity: best-in-class ≈ 82.5 %) |
| > 85 % | Exceptional or suspect | Possible on single-product, fully automated lines. If across a whole plant: verify the measurement methodology. |

Sources: Evocon (2023, 50+ countries), Sage Clarity / Epicor (2021, cross-industry), SCW.ai (2022, pharma), OEE.com / LeanProduction.com, MDCplus (2023).
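
The bands in the table above can be turned into a small lookup for dashboards or reports. The following is a minimal sketch, not part of any vendor tool: the function name `classify_oee` is hypothetical, and the handling of exact boundary values (each band includes its lower bound, with 85 % still counted as world-class) is an assumption, since the table does not say which band a boundary value belongs to.

```python
def classify_oee(oee_percent: float) -> str:
    """Map an OEE value in percent to the band names from the table above."""
    if not 0 <= oee_percent <= 100:
        raise ValueError("OEE must be between 0 and 100 percent")

    # Assumption: a value on a boundary belongs to the higher band,
    # e.g. exactly 70 % counts as "Advanced".
    bands = [
        (40, "First digitalization stage"),
        (55, "Below average"),
        (70, "Industry average"),
        (80, "Advanced"),
    ]
    for upper_bound, label in bands:
        if oee_percent < upper_bound:
            return label

    # The table lists "> 85 %" separately, so exactly 85 % stays world-class.
    if oee_percent <= 85:
        return "World-class"
    return "Exceptional or suspect"


for value in (38.0, 61.5, 82.5, 91.0):
    print(f"{value:5.1f} % -> {classify_oee(value)}")
```

A label like this is only as meaningful as the measurement behind it, so it is best applied to automatically captured values.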

The same OEE value means completely different things depending on the production environment. A packaging line at 70 % in pharma may outperform an automotive press at 80 % — because the pharma line runs validated processes with mandatory cleaning cycles, batch changeovers, and regulatory holds that consume planned time by design.

| Industry | Typical OEE range | World-class | Key limiting factor |
|---|---|---|---|
| Automotive (stamping, welding, assembly) | 65–80 % | ≥ 85 % | Setup time, tool wear, variant complexity |
| Pharma (packaging, filling) | 50–70 % | ≥ 70 % | GMP validation, cleaning, batch changeovers |
| Food & beverage (FMCG lines) | 55–75 % | ≥ 80 % | Cleaning cycles, perishable materials, seasonal demand |
| Plastics (injection molding) | 60–80 % | ≥ 85 % | Cycle time consistency, tool maintenance |
| Metal processing (CNC, forming) | 50–70 % | ≥ 75 % | High-mix/low-volume, setup dominance |
| Electronics / assembly | 55–75 % | ≥ 80 % | Manual intervention, test station bottlenecks |

The 5 factors that make benchmarks incomparable:

Insight from 15,000+ connected machines: Manually captured OEE values are systematically 8–12 percentage points higher than automatically measured values. In the first 1–2 weeks after switching to automatic capture, the displayed OEE drops by 15–20 percentage points — not because production gets worse, but because measurement becomes accurate for the first time.

This "OEE drop" is the strongest signal that data collection is working. Real losses — micro-stops under 30 seconds, unrecorded short downtimes, optimistic cycle time assumptions — become visible for the first time. At Neoperl, automatic measurement revealed that 4 PLC alarm codes caused 80 % of all machine stops — a pattern invisible in manual tracking.
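
To make the mechanism concrete, here is a minimal sketch of how the same shift can produce two different OEE values. It uses the standard definition OEE = availability × performance × quality. Everything else is an assumption for illustration: the shift numbers are invented, the names `ShiftData`, `automatic_oee`, and `manual_oee` are hypothetical, and the "manual" model (only stops of 5 minutes or more are logged, and the machine is assumed to run at ideal speed whenever no stop is logged) is one simplified way manual tracking can go wrong, not a description of any specific plant.

```python
from dataclasses import dataclass


@dataclass
class ShiftData:
    planned_minutes: float
    stop_minutes: list[float]   # every stop, including micro-stops
    ideal_cycle_seconds: float
    total_parts: int
    good_parts: int


def automatic_oee(shift: ShiftData) -> float:
    """OEE with every stop captured and performance measured against the ideal cycle."""
    run_minutes = shift.planned_minutes - sum(shift.stop_minutes)
    availability = run_minutes / shift.planned_minutes
    performance = shift.ideal_cycle_seconds * shift.total_parts / (run_minutes * 60)
    quality = shift.good_parts / shift.total_parts
    return availability * performance * quality


def manual_oee(shift: ShiftData, min_logged_stop_minutes: float = 5.0) -> float:
    """OEE as a simplified manual log might report it (illustrative assumption)."""
    logged = sum(s for s in shift.stop_minutes if s >= min_logged_stop_minutes)
    availability = (shift.planned_minutes - logged) / shift.planned_minutes
    performance = 1.0  # assumption: speed losses are not measured at all
    quality = shift.good_parts / shift.total_parts
    return availability * performance * quality


# Invented example shift: two long stops plus 40 micro-stops of 24 seconds each.
shift = ShiftData(
    planned_minutes=480,
    stop_minutes=[30.0, 12.0] + [0.4] * 40,
    ideal_cycle_seconds=30.0,
    total_parts=760,
    good_parts=741,
)

gap_points = (manual_oee(shift) - automatic_oee(shift)) * 100
print(f"automatic: {automatic_oee(shift):.1%}")
print(f"manual:    {manual_oee(shift):.1%}")
print(f"gap:       {gap_points:.1f} percentage points")
```

Note that the automatic value simplifies to good parts × ideal cycle time ÷ planned time. Unlogged micro-stops alone only move loss from availability into performance; the reported OEE becomes too high once performance is not measured or the cycle time assumption is too generous.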

The practical consequence for benchmarking: Never compare your newly measured automatic OEE to industry benchmarks derived from mixed manual/automatic data. Your first real baseline will look worse than expected. That is correct. Start improving from there.

| # | Rule | Why it matters |
|---|---|---|
| 1 | Establish your own baseline first | External benchmarks are meaningless without your own reliable data. Measure automatically for 4+ weeks before comparing. |
| 2 | Compare within context | Only compare similar processes, product families, and automation levels. Cross-industry benchmarks mislead. |
| 3 | Set differentiated targets | One plant-wide OEE target is useless. Define targets per line, product family, or shift. A stamping press and a packaging line have different physics. |
| 4 | Focus on the trend, not the number | A plant improving from 52 % to 62 % in 6 months is outperforming a plant stable at 75 %. The rate of improvement matters more than the absolute value. |
| 5 | Translate gaps into action | The value of benchmarks is not the number; it is identifying where and why losses occur. Use Pareto analysis on availability, performance, and quality losses separately. |
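
Rule 5 can be sketched in a few lines: group recorded losses by OEE factor and, within each factor, keep the top causes until they explain a chosen share of the lost time. This is a rough illustration only. The function name `pareto_by_category`, the 80 % cutoff, and all cause names and minutes are assumptions, not data from any plant.

```python
from collections import defaultdict


def pareto_by_category(losses, threshold=0.8):
    """For each loss category, return the top causes that together reach
    `threshold` of that category's lost minutes, largest cause first."""
    minutes_by_cause = defaultdict(lambda: defaultdict(float))
    for category, cause, minutes in losses:
        minutes_by_cause[category][cause] += minutes

    result = {}
    for category, causes in minutes_by_cause.items():
        ranked = sorted(causes.items(), key=lambda item: item[1], reverse=True)
        category_total = sum(causes.values())
        cumulative = 0.0
        top_causes = []
        for cause, minutes in ranked:
            cumulative += minutes
            top_causes.append(cause)
            if cumulative >= threshold * category_total:
                break
        result[category] = top_causes
    return result


# Invented loss records: (OEE factor, cause, lost minutes).
losses = [
    ("availability", "changeover", 120),
    ("availability", "tool break", 60),
    ("availability", "material shortage", 20),
    ("performance", "micro-stops", 45),
    ("performance", "reduced speed", 15),
    ("quality", "startup scrap", 30),
]

for category, causes in pareto_by_category(losses).items():
    print(f"{category}: {', '.join(causes)}")
```

Keeping the three factors separate avoids a large availability loss hiding the main quality or performance driver.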

Meleghy Automotive — cross-plant benchmarking done right: With SYMESTIC deployed across 6 plants in 4 countries (DE, CZ, HU, ES), Meleghy uses a single data model with identical downtime categories, capture thresholds, and target cycle times. This makes OEE values comparable across Wilnsdorf, Gera, Brandýs, Bernsbach, Reinsdorf, and Miskolc — enabling real best-practice transfer. Without this standardization, the 10 % stoppage reduction and 7 % output improvement would have been invisible between plants.
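
Before comparing OEE between plants, it helps to check mechanically that the underlying definitions really match. The sketch below is an assumption-laden illustration, not the SYMESTIC data model: the field names (`downtime_categories`, `min_stop_seconds`, `target_cycle_seconds`), the plant names, and all values are hypothetical.

```python
# Hypothetical definition fields that must match for OEE values to be comparable.
COMPARED_FIELDS = ("downtime_categories", "min_stop_seconds", "target_cycle_seconds")


def definition_mismatches(plants, reference_plant):
    """Return, per plant, the definition fields that differ from the reference plant."""
    reference = plants[reference_plant]
    mismatches = {}
    for name, definition in plants.items():
        differing = [
            field for field in COMPARED_FIELDS
            if definition.get(field) != reference.get(field)
        ]
        if differing:
            mismatches[name] = differing
    return mismatches


# Invented example: plant_b only counts stops longer than two minutes.
plants = {
    "plant_a": {
        "downtime_categories": {"setup", "breakdown", "material"},
        "min_stop_seconds": 30,
        "target_cycle_seconds": {"bracket": 4.0},
    },
    "plant_b": {
        "downtime_categories": {"setup", "breakdown", "material"},
        "min_stop_seconds": 120,
        "target_cycle_seconds": {"bracket": 4.0},
    },
}

mismatches = definition_mismatches(plants, reference_plant="plant_a")
print(mismatches or "Definitions match; OEE values are comparable.")
```

Any plant that shows up in the result would need its definitions aligned before its OEE is placed next to the others.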

| Limitation | What goes wrong | What to do instead |
|---|---|---|
| False precision | High OEE from narrow product range or relaxed quality thresholds, not real efficiency | Always check what's behind the number |
| Measurement inconsistency | Manual vs. automatic data, different stop thresholds, varying cycle time definitions | Standardize definitions before comparing |
| Blind spots | Planning delays, logistics, workforce shortages fall outside OEE | Supplement with TEEP, OTD, lead time |
| Over-optimization risk | Maximizing speed without considering quality inflates OEE temporarily | Track quality losses separately |
| No economic context | 90 % OEE on an unprofitable product is still a loss | Combine OEE with contribution margin / machine hour |
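
Two of the supplements named in the table are simple enough to sketch. TEEP multiplies OEE by utilization (planned production time divided by calendar time), and contribution margin per machine hour puts an economic value on the output. The function names and all figures below are assumptions chosen for illustration only.

```python
def teep(oee: float, planned_hours: float, calendar_hours: float) -> float:
    """TEEP = OEE x utilization, with utilization = planned time / calendar time."""
    if calendar_hours <= 0 or not 0 <= planned_hours <= calendar_hours:
        raise ValueError("planned_hours must lie between 0 and calendar_hours")
    return oee * planned_hours / calendar_hours


def margin_per_machine_hour(unit_price: float, unit_variable_cost: float,
                            good_parts: int, machine_hours: float) -> float:
    """Contribution margin earned per machine hour (negative means a loss)."""
    if machine_hours <= 0:
        raise ValueError("machine_hours must be positive")
    return (unit_price - unit_variable_cost) * good_parts / machine_hours


# Invented example: a line at 90 % OEE, scheduled 80 of 168 hours per week,
# making a product that sells below its variable cost.
week_teep = teep(oee=0.90, planned_hours=80, calendar_hours=168)
margin = margin_per_machine_hour(
    unit_price=1.20, unit_variable_cost=1.35, good_parts=40_000, machine_hours=80
)

print(f"TEEP: {week_teep:.1%}")
print(f"Contribution margin per machine hour: {margin:.2f}")
```

In this made-up case the OEE looks excellent, while TEEP shows that the asset sits idle for more than half of the week and the margin shows that every machine hour loses money.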

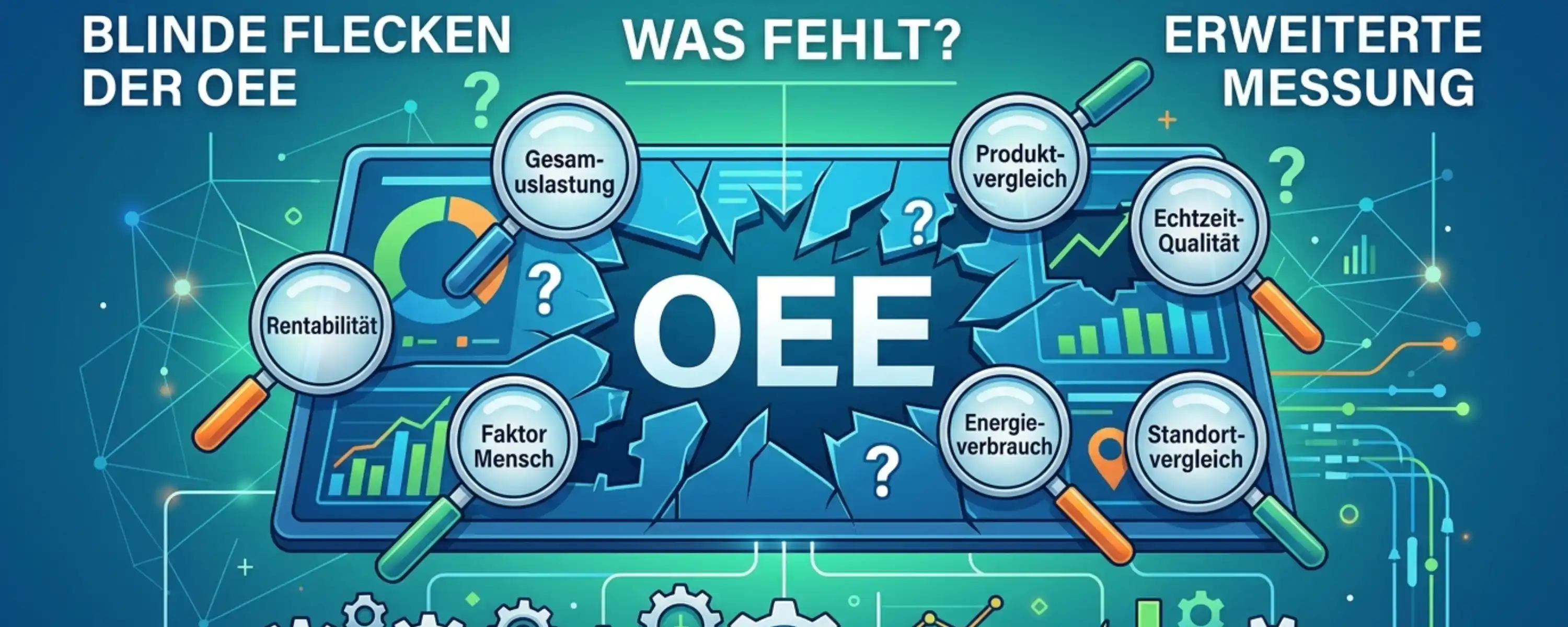

For a deep dive into all 7 systematic blind spots of OEE: OEE Limits: 7 Blind Spots of the Metric.

What is a good OEE value?

It depends on industry, product mix, and automation level. For discrete manufacturing, 55–70 % is the global average. 70–80 % is advanced. Above 80 % is world-class — sustained only by the top 5–10 % of plants. In pharma, 70 % can already be excellent due to regulatory constraints.

Is 85 % OEE realistic?

On single-product, fully automated lines — yes. As a plant-wide average across multiple products and lines — rarely sustained. Most publications citing 85 % as "world-class" refer to a theoretical ideal, not a widespread reality. The global average is closer to 60 %.

Why does my OEE drop when I switch to automatic measurement?

Because manual OEE is systematically 8–12 percentage points too high. Micro-stops, short downtimes, and optimistic cycle time assumptions are invisible in manual tracking. Automatic measurement captures everything — and the first accurate baseline looks worse. That is correct. Improve from there.

Can I compare OEE across plants?

Only if downtime categories, capture thresholds, target cycle times, and measurement methods are identical. Without standardization, apparent performance differences between plants are artifacts of definition, not reality. A cloud-native MES with a unified data model makes this operationally feasible.

How often should I update OEE benchmarks?

Annually at minimum. When new technologies (AI-based maintenance, adaptive scheduling) are introduced, benchmarks shift. Internal benchmarks should be reviewed quarterly alongside the improvement roadmap.

The key takeaway: OEE benchmarks are useful when interpreted honestly. The 85 % "world-class" target is a direction, not a destination for most plants. What matters more: accurate measurement, honest baselines, context-appropriate targets, and, above all, sustained improvement over time. A plant improving from 52 % to 62 % is more impressive than one stable at 75 %.

→ What is OEE? · → OEE Formula · → Improve OEE · → OEE Limits · → TEEP, OAE & OLE · → OEE Software

